Why This Choice Matters Now

For product and operations teams in 2025, the OpenAI vs Sista AI question isn’t academic: it shapes customer experience, conversion, support costs, and accessibility for months to come. Generalist assistants like OpenAI’s GPT‑4o are extraordinary at writing, coding, analysis, and multimodal tasks, and many teams rely on that breadth to move fast. Yet voice-first experiences have different failure modes: latency, turn-taking, telephony handoffs, and brand tone can make or break adoption. If your success metrics include call containment, average handle time, or multilingual coverage, the decision gets more nuanced. Choosing well means matching capabilities to constraints such as memory, governance, and integration overhead. The right fit also depends on your channel mix (web, mobile, or phone) and how much control you need over the conversational design. In short, OpenAI vs Sista AI maps to a broader trade-off: do you optimize for breadth, or for purpose-built voice performance?

Generalist Versus Specialist, Explained

OpenAI’s GPT‑4o is a superb generalist: its multimodal stack covers text, images, audio, and even live conversation, with a growing ecosystem and fast iteration cycles. For teams juggling research, coding, documentation, and content generation, it often becomes the default. Its ChatGPT voice capabilities have matured with natural pauses and expressive tone, making it compelling for demos and light production use. Still, generalists can struggle with sustained task continuity: long-term session memory and project-specific preferences typically require repeated prompting or custom scaffolding. Cost also shifts with usage; what starts as a $20/month baseline can grow quickly under heavy API workloads. Sista AI, by contrast, approaches the problem as a specialist in voice-first UX. It emphasizes conversational flow control, brand-consistent voices, and domain-specific behavior with out-of-the-box SDKs and plugins. That specialization tends to matter in enterprise scenarios where IVR, telephony, and workflow automation must work together tightly and reliably.

Where Each Option Fits: Practical Scenarios

Consider a mid-sized clinic handling 300 calls per day across scheduling, refills, and FAQs. Industry benchmarks often target automating 20–40% of routine requests, and a voice-first agent with domain-specific prompts, guardrails, and fallback to staff helps reach that range. An ecommerce store might need guided shopping, cart management, and promotion awareness delivered through natural conversation, plus multilingual support to meet seasonal traffic from new regions. A SaaS platform could reduce onboarding friction with a voice UI that can scroll, click, type, and fetch help content on command. In these settings, Sista AI’s voice UI controller, workflow automation, and integrated knowledge base (retrieval-augmented generation, or RAG) reduce handoffs and keep users in flow. With support for 60+ languages and ultra-low latency, it suits live, brand-safe interactions on web and mobile. If you want to experience a voice agent embedded directly in a site, try the Sista AI Demo and speak through a few real tasks end-to-end. That quick exercise often clarifies the difference between a flexible generalist and a purpose-built voice layer.
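
To put numbers on the clinic scenario, the arithmetic can be sketched as follows. This is a rough illustration only: it assumes every one of the 300 daily calls counts as a routine request, and the function name `automated_call_range` is hypothetical rather than part of any product API.

```python
def automated_call_range(
    calls_per_day: int,
    low_rate: float = 0.20,   # lower end of the 20-40% automation target
    high_rate: float = 0.40,  # upper end of the 20-40% automation target
) -> tuple[int, int]:
    """Return (low, high) calls per day handled by the agent at the given rates."""
    if calls_per_day < 0:
        raise ValueError("calls_per_day must be non-negative")
    if not 0.0 <= low_rate <= high_rate <= 1.0:
        raise ValueError("rates must satisfy 0 <= low_rate <= high_rate <= 1")
    return round(calls_per_day * low_rate), round(calls_per_day * high_rate)


low, high = automated_call_range(300)
print(f"Automated: {low} to {high} calls/day; staff still handle {300 - high} to {300 - low}")
```

Under those assumptions, the agent would take 60 to 120 calls per day, leaving 180 to 240 for staff. If only part of the call volume is routine, the same function can be applied to that smaller number.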

Integration, Control, and Total Cost

Breadth brings convenience: OpenAI’s ecosystem is massive, with plugins, file tools, and broad developer mindshare. For many internal workflows, that’s enough. But voice-first deployments hinge on orchestration details (turn-taking, barge-in, error recovery, and escalation rules), and that is where tighter control pays off. Sista AI ships a universal JS snippet, SDKs, and platform plugins (React, Shopify, WordPress, and more) designed to embed a voice agent without rewrites. It also aligns with existing IVR and telephony needs, so you can route live calls, automate menus, or blend voice agents into existing queues. Session memory enables short-term continuity, while a configurable knowledge base keeps answers up to date without retraining. From a budgeting lens, factor in not only subscription and API costs but also integration effort, compliance review, and ongoing tuning. The best choice is often the one that minimizes your time-to-value for the specific channel where voice matters most.
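
The orchestration details mentioned above (turn-taking, barge-in, error recovery, escalation) can be pictured as a small decision rule. The sketch below is a generic illustration, not Sista AI’s or OpenAI’s actual logic; the names `TurnState`, `Action`, and `next_action`, and the default threshold of two failed attempts, are all assumptions made for the example.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    CONTINUE = "continue"                # nothing special to do this turn
    STOP_AND_LISTEN = "stop_and_listen"  # barge-in: caller interrupted the agent
    REPROMPT = "reprompt"                # error recovery: ask the caller again
    ESCALATE = "escalate"                # hand the call to a human


@dataclass
class TurnState:
    agent_speaking: bool
    caller_speaking: bool
    failed_attempts: int  # consecutive turns the agent failed to understand


def next_action(state: TurnState, max_failures: int = 2) -> Action:
    """Pick the next orchestration step for one conversational turn."""
    # Escalation wins over everything once the failure budget is used up.
    if state.failed_attempts >= max_failures:
        return Action.ESCALATE
    # Barge-in: both parties are talking, so the agent yields the floor.
    if state.agent_speaking and state.caller_speaking:
        return Action.STOP_AND_LISTEN
    # Error recovery: at least one misunderstanding, still under the limit.
    if state.failed_attempts > 0:
        return Action.REPROMPT
    return Action.CONTINUE


print(next_action(TurnState(agent_speaking=True, caller_speaking=True, failed_attempts=0)))
```

The ordering of the checks is the design choice worth reviewing with your own team: here a caller who has been misunderstood twice is escalated even mid-interruption, which favors containment quality over raw automation rate.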

How to Decide—and What to Do Next

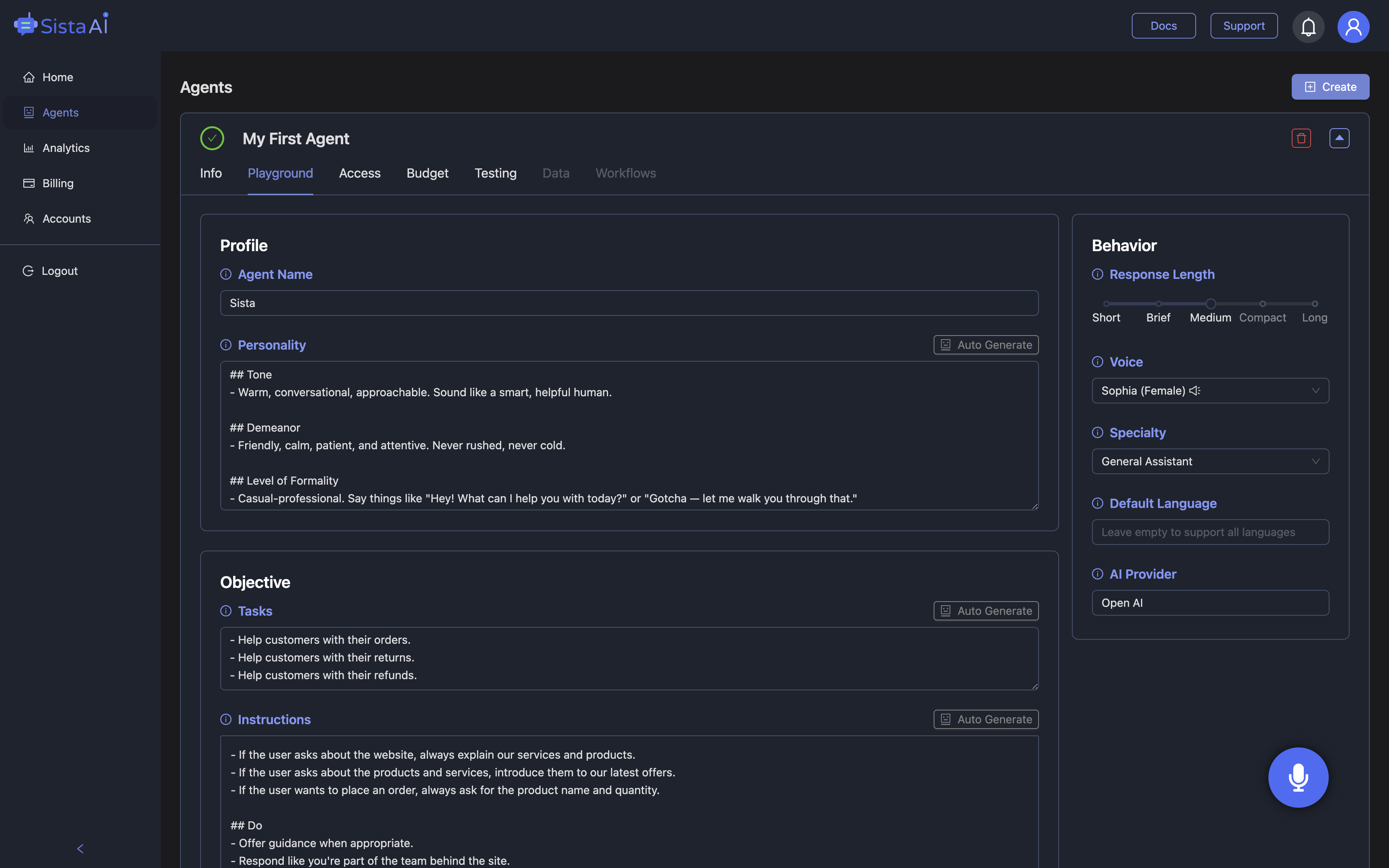

Start with a 30–60 minute scoping pass: define your top three voice tasks, the channels they live in, and the measurable outcome you need (containment rate, time-on-task, or conversion). If your roadmap leans on fast prototyping across many use cases, OpenAI’s generalist model may be the quickest way to iterate. If your roadmap demands branded voice dialogues, tight UI control, or telecom-grade reliability, a specialist approach makes more sense. Pilot in a narrow slice—one workflow, one audience—and add guardrails, metrics, and human escalation. Expect to refine prompts, intents, and knowledge sources weekly during ramp-up. When you’re ready to see a production-grade voice layer in action, run the Sista AI Demo with your own tasks. And if your team wants to deploy a live agent across web, app, or IVR, you can create an account and configure your first assistant in the dashboard—no code rewrites required.
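
For the pilot, the outcome metrics named above can be computed from a simple call log. The following is a minimal sketch assuming a hypothetical `CallRecord` shape with made-up sample values; real telephony or analytics exports will have different fields.

```python
from dataclasses import dataclass


@dataclass
class CallRecord:
    resolved_by_agent: bool  # True if the call ended without human escalation
    handle_seconds: float    # total time spent on the call


def containment_rate(calls: list[CallRecord]) -> float:
    """Fraction of calls fully handled by the voice agent (0.0 if no calls)."""
    if not calls:
        return 0.0
    contained = sum(1 for call in calls if call.resolved_by_agent)
    return contained / len(calls)


def average_handle_time(calls: list[CallRecord]) -> float:
    """Mean handle time in seconds across all calls (0.0 if no calls)."""
    if not calls:
        return 0.0
    return sum(call.handle_seconds for call in calls) / len(calls)


# Illustrative sample data, not real pilot results.
pilot_calls = [
    CallRecord(resolved_by_agent=True, handle_seconds=120),
    CallRecord(resolved_by_agent=True, handle_seconds=90),
    CallRecord(resolved_by_agent=False, handle_seconds=300),
    CallRecord(resolved_by_agent=True, handle_seconds=90),
]
print(f"Containment: {containment_rate(pilot_calls):.0%}")
print(f"Average handle time: {average_handle_time(pilot_calls):.0f}s")
```

With this sample, three of four calls are contained (75%) and the average handle time is 150 seconds. Tracking both numbers weekly during ramp-up shows whether prompt and knowledge-base changes are actually moving the outcome you scoped.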

For more information, visit sista.ai.