The Future of AI Voice Interfaces Starts With Human-Like Speech

The future of AI voice interfaces is arriving faster than many expected, and the shift is measurable in both quality and scale. By the end of 2024, roughly 8.4 billion voice assistant devices were in use globally, more than the world’s population, signaling multi-device habits and the spread of IoT. Meanwhile, about 20.5% of internet users now rely on voice search, a steady uptick from previous years that reflects growing comfort with conversational inputs. On the quality side, modern voice generators and APIs produce remarkably lifelike speech, enabling e-learning narration, accessible media, and AI assistants that convey emotional nuance. Tools like Murf AI and Speechify exemplify this progress, transforming text into engaging audio across dozens of languages for creators and students. Real-time systems from PlayHT and ElevenLabs add emotional expression and multi-language support, making voice apps feel personable, not mechanical. As “chatgpt voice” becomes a common search and capability, more teams are exploring multimodal assistants that can talk, see, and act. In this context, Sista AI focuses on the practical layer: plug-and-play voice agents that embed directly in websites and apps, so teams can benefit from this wave without rebuilding their stacks.

Why Market Momentum Matters for Builders and Brands

The future of AI voice interfaces is not just a tech story; it’s a business story backed by adoption and revenue. In the United States alone, about 153.5 million people use voice assistants in 2025, with Google Assistant projected near 92 million users and Siri serving roughly 86.5 million. Voice-driven commerce is material, too: an estimated 38.8 million Americans use smart speakers to shop, influencing product discovery and repeat orders. On the enterprise side, the virtual assistant market rose from about $6.37 billion in 2024 to an expected $8.17 billion in 2025, an increase of roughly 28% in a single year. Major platforms are investing accordingly: Amazon Alexa generated around $10.5 billion in 2024, while Apple’s services business, which includes Siri advances, contributed roughly $70 billion. Next-gen assistants are becoming more capable, as seen with Alexa+ subscriptions that bundle advanced conversational AI and automated task handling. Apple’s “Apple Intelligence” and on-screen awareness help Siri understand context, handle complex queries, and even tap external models when helpful. For teams planning roadmaps, these signals suggest voice will be a primary interface layer across homes, cars, and software. That is one reason Sista AI pairs voice automation with UI control to move from talk to tangible actions.

From Recognition to Reasoning: What “Good” Looks Like in 2025

Historically, voice systems recognized words; now they manage multi-turn conversations, maintain context, and perform tasks across apps. The future of AI voice interfaces is defined by generative AI, neural speech synthesis, and real-time understanding that feels natural. Contact centers show what this unlocks: leading voice AI providers report up to 85% reliability while handling 150 concurrent calls with sub-2-second response times. In practice, that can route common inquiries instantly, freeing human agents for edge cases and empathy-heavy issues. Consider three scenarios. First, a retail brand uses a voice agent to capture intent, check stock, and push a personalized cart—turning browsing into frictionless buying. Second, a clinic triages symptoms, schedules appointments, and shares pre-visit instructions, improving throughput without sacrificing care. Third, a B2B SaaS guides onboarding verbally, executing in-product steps on command and reducing time-to-value. As users search for “chatgpt voice” features, they increasingly expect these assistants to read screens, parse images, and chain tasks, not just transcribe speech. Sista AI’s approach addresses that expectation by blending conversational intelligence with automation and screen awareness, keeping latency low so interactions feel human.

A Practical Playbook for Deploying Voice—Without the Growing Pains

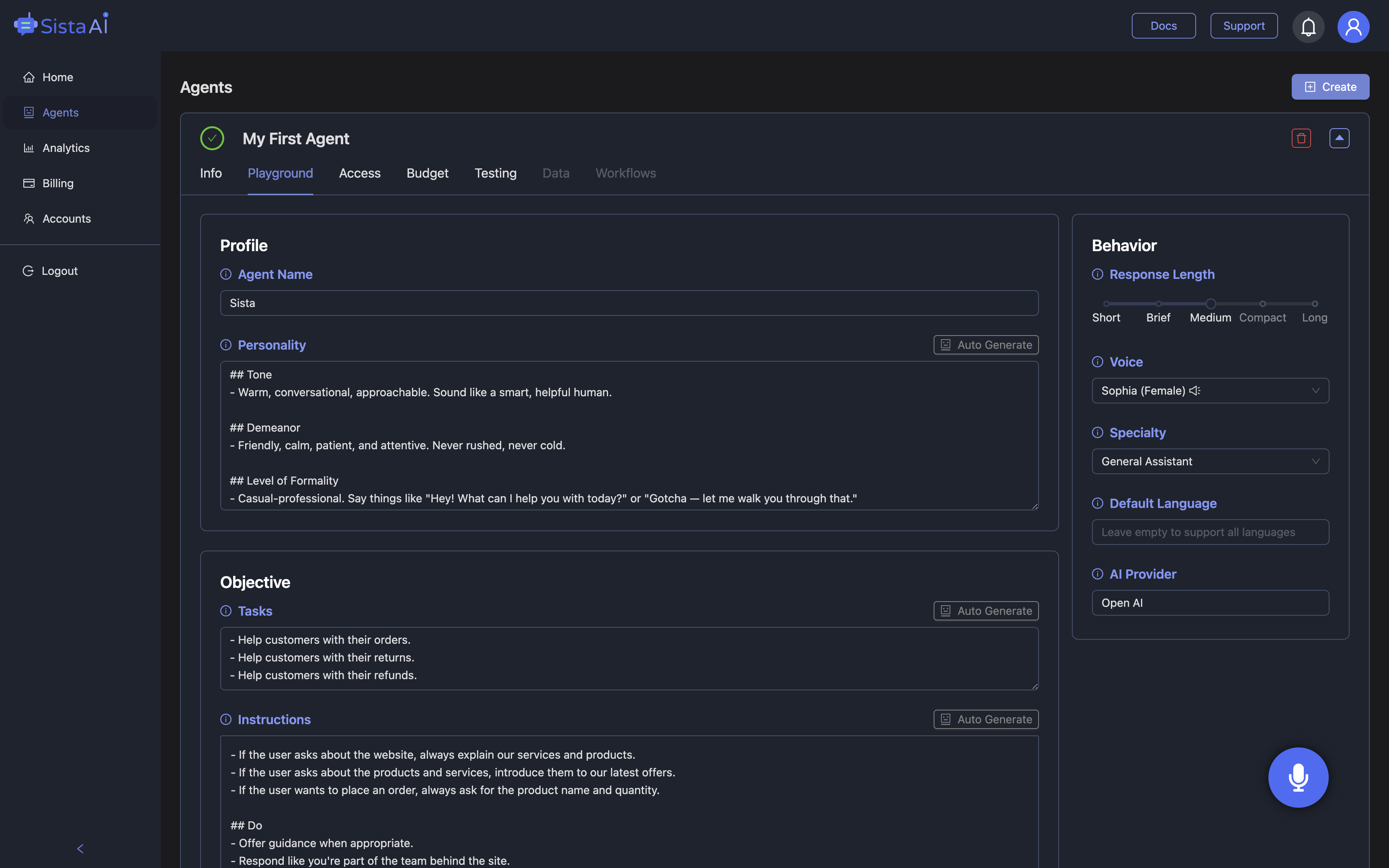

Teams exploring the future of AI voice interfaces should start with a narrow, high-impact domain: two to five intents that resolve common user needs. Define success metrics (CSAT, conversion rate, AHT reduction), choose a voice profile that matches your brand, and set a latency budget under two seconds for live conversations. Opt for providers and APIs that support multilingual use, emotional tone control, and real-time streaming to avoid robotic pauses. Build safety nets: confidence thresholds, handoff to chat or human agents, and clear consent for data usage to meet GDPR and accessibility standards. Instrument everything—call outcomes, interruption patterns, fallback rates—and use A/B tests to tune prompts, dialog flows, and voices. Finally, integrate action: a voice that can read the screen, click buttons, fill forms, and call APIs elevates delight into delivery. This is where Sista AI is intentionally opinionated: its embeddable agent adds voice UI control (scroll, click, type), workflow automation, session memory, and integrated RAG over your knowledge base, all with ultra-low latency. You can try a live example in minutes via the Sista AI Demo, then tune permissions and behaviors from a no-code dashboard.
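To make the safety nets and instrumentation above more concrete, here is a minimal Python sketch of how a team might route each conversational turn and track fallback rates against a latency budget. Everything in it is an assumption for illustration: the `Turn` fields, the 0.75 confidence threshold, and the function names are hypothetical and do not come from any particular provider's API. Only the two-second budget is taken from the playbook itself.

```python
from dataclasses import dataclass

# Hypothetical values for illustration; tune them against your own traffic.
CONFIDENCE_THRESHOLD = 0.75  # below this, ask the user to rephrase
LATENCY_BUDGET_S = 2.0       # the "under two seconds" budget for live conversations


@dataclass
class Turn:
    intent: str        # intent name reported by the recognizer
    confidence: float  # recognizer confidence in the range 0..1
    latency_s: float   # seconds from end of user speech to a ready response


def route(turn: Turn, supported_intents: set[str]) -> str:
    """Return "answer", "clarify", or "handoff" for a single turn."""
    if turn.intent not in supported_intents:
        return "handoff"  # out of scope: pass to chat or a human agent
    if turn.confidence < CONFIDENCE_THRESHOLD:
        return "clarify"  # low confidence: confirm before acting
    return "answer"


def fallback_rate(decisions: list[str]) -> float:
    """Share of turns that did not end in a direct answer."""
    if not decisions:
        return 0.0
    return sum(d != "answer" for d in decisions) / len(decisions)


def over_budget_rate(turns: list[Turn]) -> float:
    """Share of turns whose latency exceeded the budget."""
    if not turns:
        return 0.0
    return sum(t.latency_s > LATENCY_BUDGET_S for t in turns) / len(turns)


if __name__ == "__main__":
    supported = {"check_order_status", "book_appointment"}
    turns = [
        Turn("check_order_status", 0.92, 1.1),
        Turn("check_order_status", 0.40, 0.9),
        Turn("cancel_subscription", 0.95, 2.6),
    ]
    decisions = [route(t, supported) for t in turns]
    print(decisions)
    print(f"fallback rate: {fallback_rate(decisions):.2f}")
    print(f"over latency budget: {over_budget_rate(turns):.2f}")
```

Keeping the supported intent set small mirrors the advice to start with two to five intents, and logging the two rates per experiment arm gives A/B tests something measurable to compare.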

How Sista AI Turns Conversations into Completed Tasks

Because the future of AI voice interfaces is cross-platform, Sista AI ships as SDKs, universal JS snippets, and plugins for stacks like React, Shopify, and WordPress—no rewrites, just a script key. For e-commerce, the Shopify AI Sales Agent handles product discovery by voice, compares variants, manages carts, and assists checkout—useful when 13.6% of Americans already shop via smart speakers. In support, a voice agent can authenticate a user, summarize on-screen policy pages, and execute account updates—mirroring the contact center benchmarks of sub-2-second response and scalable concurrency. In education, Sista AI narrates lessons, answers context-aware questions, and logs progress so learners retain more in less time. For accessibility, the built-in screen reader summarizes pages and performs actions for users who prefer or require voice-first navigation. Technical teams can go deeper with full-stack code execution, calling internal APIs to, say, fetch inventory or trigger a refund workflow. If you are mapping a broader AI transformation, Sista AI’s consultancy helps define voice personas, select the right TTS/STT stack, and design safe, compliant flows; when you’re ready, you can create an account and roll out your first production agent.
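As a rough illustration of what turning a recognized intent into an internal API call can look like, the Python sketch below maps intent names to handler functions. It is not Sista AI's SDK or API: the registry, the handler names, the slot keys, and the in-memory inventory are all hypothetical stand-ins for whatever internal services a team actually exposes.

```python
from typing import Callable, Dict

# Hypothetical registry of intent name -> handler; not taken from any real SDK.
HANDLERS: Dict[str, Callable[[dict], str]] = {}

# Stand-in for an internal inventory service (assumed data, for illustration only).
_FAKE_INVENTORY = {"sku-123": 4}


def handles(intent: str):
    """Decorator that registers a function as the handler for one intent."""
    def register(fn: Callable[[dict], str]) -> Callable[[dict], str]:
        HANDLERS[intent] = fn
        return fn
    return register


@handles("fetch_inventory")
def fetch_inventory(slots: dict) -> str:
    sku = slots.get("sku", "")
    count = _FAKE_INVENTORY.get(sku)
    if count is None:
        return f"I could not find {sku}."
    return f"{count} units of {sku} are in stock."


@handles("trigger_refund")
def trigger_refund(slots: dict) -> str:
    order_id = slots.get("order_id")
    if not order_id:
        return "Which order would you like refunded?"
    # Sensitive action: require explicit confirmation before doing anything.
    if not slots.get("confirmed"):
        return f"Please confirm that you want a refund for order {order_id}."
    return f"Refund started for order {order_id}."


def dispatch(intent: str, slots: dict) -> str:
    """Run the handler for an intent, or fall back to a human handoff message."""
    handler = HANDLERS.get(intent)
    if handler is None:
        return "I can't do that yet, so let me connect you with a person."
    return handler(slots)


if __name__ == "__main__":
    print(dispatch("fetch_inventory", {"sku": "sku-123"}))
    print(dispatch("trigger_refund", {"order_id": "A1"}))
    print(dispatch("trigger_refund", {"order_id": "A1", "confirmed": True}))
    print(dispatch("change_address", {}))
```

The confirmation step on the refund handler reflects one possible design choice: actions that move money or change accounts ask for an explicit yes before running, while read-only lookups answer immediately.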

Bottom Line: Voice Is Becoming the Interface, Not Just a Feature

The data is unambiguous: billions of devices, tens of millions of voice shoppers, and rapid market growth are pushing conversation to the front door of digital experiences. The future of AI voice interfaces blends realistic speech, context retention, and automated action, so users feel heard and helped in real time. Builders who pair lifelike voices with reliable execution—screen control, API calls, and knowledge-grounded answers—will see measurable gains in conversion, retention, and support efficiency. If you want a quick, low-risk way to evaluate this, spin up a proof of concept with the Sista AI Demo and simulate a real customer journey from greeting to completed task. When the flow feels right, you can sign up and deploy across your site or app with a few lines of code. Thoughtfully designed voice agents aren’t a novelty anymore—they’re the new, human way your product gets things done.

For more information, visit sista.ai.