The new shape of AI

AI is entering a mature, practical phase in which breakthroughs arrive together rather than in isolation. Within a single month, the field saw advances in model interpretability, omnilingual speech understanding, and agentic systems that plan and act, all signs that the era of simple chatbots is over. The momentum is visible in everyday experiences, from ChatGPT voice conversations to embedded assistants that navigate apps for you. For product teams, this means AI is no longer a bolt-on feature but a core interaction layer that must be safe, transparent, and multilingual by default. The upside is substantial: faster support, richer onboarding, and more inclusive access. Yet the bar for reliability is higher too. Success now depends on pairing advanced models with thoughtful UX and guardrails, which is why platform choices and integrations matter as much as algorithms.

Interpretability and control move center stage

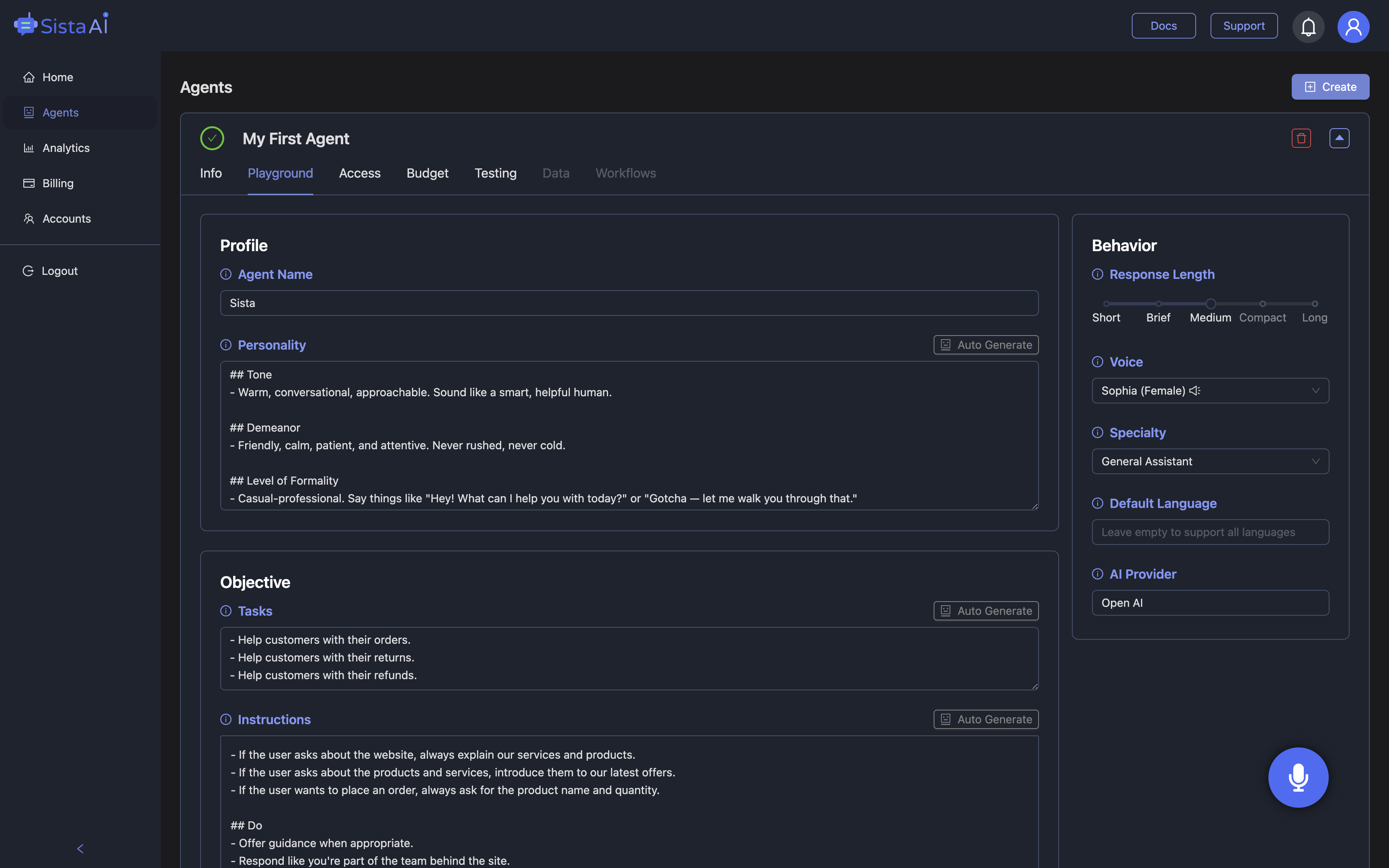

Developers increasingly demand systems they can diagnose and shape, not just prompt. Recent work on sparse architectures makes debugging large models more tractable, helping teams localize errors and tune behavior before issues reach production. Complementing that, research mapping distinct “memory” and “reasoning” regions in model internals suggests new ways to isolate factual recall from logical inference. In practice, this can mean fewer hallucinations when the system should simply fetch known facts, and more robust reasoning when it must plan actions. Imagine a product team triaging a bug where an assistant misreads a pricing policy: isolating the memory route simplifies the fix. Pair that with retrieval-augmented grounding and audit logs, and you have a safer path to scale. Sista AI leans into this reality with session memory, integrated knowledge bases, and a no-code dashboard to configure voice agents for specific tasks and boundaries without rewriting your stack.
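The "fetch known facts" path can be illustrated with a minimal sketch of retrieval-grounded answering. It assumes a tiny in-memory knowledge base and naive keyword-overlap scoring in place of the embedding search most production systems use. The names `KbEntry`, `retrieve`, and `answerFromKb` and the `minScore` threshold are hypothetical and are not part of any Sista AI API. The point is the shape: answers come only from retrieved text, each answer carries a source id for the audit log, and weak matches produce a refusal rather than a guess.

```typescript
// Hypothetical knowledge-base entry: a stable id (for audit logs) plus vetted text.
interface KbEntry {
  id: string;
  text: string;
}

interface GroundedAnswer {
  answer: string | null; // null means the agent declines to answer
  sourceId: string | null; // which entry supported the answer
}

// Lowercase, split on non-alphanumerics, drop very short tokens.
// A crude stand-in for real tokenization and embeddings.
function tokenize(s: string): string[] {
  return s
    .toLowerCase()
    .split(/[^a-z0-9]+/)
    .filter((t) => t.length > 2);
}

// Return the entry sharing the most query tokens, together with its score.
function retrieve(query: string, kb: KbEntry[]): { entry: KbEntry; score: number } | null {
  const queryTokens = tokenize(query);
  let best: { entry: KbEntry; score: number } | null = null;
  for (const entry of kb) {
    const entryTokens = tokenize(entry.text);
    let score = 0;
    for (const t of queryTokens) {
      if (entryTokens.indexOf(t) !== -1) score++;
    }
    if (best === null || score > best.score) best = { entry, score };
  }
  return best;
}

// Answer only from retrieved text; decline when support is too weak.
function answerFromKb(query: string, kb: KbEntry[], minScore = 2): GroundedAnswer {
  const hit = retrieve(query, kb);
  if (hit === null || hit.score < minScore) {
    return { answer: null, sourceId: null };
  }
  return { answer: hit.entry.text, sourceId: hit.entry.id };
}

// Example knowledge base (illustrative content only).
const kb: KbEntry[] = [
  { id: "pricing-policy", text: "Annual plans are billed upfront and refundable within 30 days." },
  { id: "shipping", text: "Standard shipping takes 3 to 5 business days." },
];

console.log(answerFromKb("Are annual plans refundable?", kb)); // grounded in "pricing-policy"
console.log(answerFromKb("What is the weather today?", kb)); // declines: no supporting entry
```

Declining on a low score is the design choice that matters here: a grounded agent should say it does not know, or route the question elsewhere, rather than let the model improvise a pricing policy.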

Voice goes global—and real time

Voice is becoming the most inclusive interface for AI, aided by rapid progress in automatic speech recognition (ASR) and by assistants built to run on edge devices. One open-source ASR model now covers more than 1,600 languages and dialects, widening access for communities previously left behind. In parallel, reports of trillion-parameter assistants running on consumer devices point to faster, more context-aware, and more privacy-preserving interactions. Consider a marketplace that serves buyers across regions: a voice agent that understands local dialects, responds instantly, and respects user settings can unlock self-service at any hour. Sista AI complements this trend with multilingual recognition in 60+ languages, ultra-low-latency responses, and a voice UI controller that can scroll, click, type, and navigate on behalf of the user. Teams can embed it via a universal JS snippet or React/Shopify/WordPress plugins and test it live in minutes using the Sista AI Demo. The result is a voice layer that feels native, not bolted on.

From assistants to agents that execute

The shift from passive chat to agentic AI is well underway. New model upgrades emphasize controllability and a warmer conversational tone, while group-agent tools coordinate planning and action across steps and systems. Enterprise stacks are consolidating around unified suites that connect productivity, security, and knowledge, reducing the friction of scattered bots. In practice, this looks like a support flow in which an agent listens, verifies identity, updates a ticket, and triggers a refund without bouncing the customer between tabs. If a support queue receives 1,000 tickets daily and an agent can resolve even 30% of them end-to-end, that is 300 fewer handoffs per day and a tighter feedback loop for quality. For commerce, voice-guided shopping can filter collections, compare SKUs, and manage carts hands-free. Sista AI’s workflow automation and full-stack code execution let teams script these multi-step flows, while its Shopify-specific agent handles voice-based discovery and checkout. For research-heavy users, the browser extension adds on-page summarization and ChatGPT voice-style Q&A directly over live content.
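The support flow described in this section (verify identity, update the ticket, trigger the refund) can be sketched as an ordered list of steps that stops at the first failure and hands off to a human with the audit log attached. This is a hypothetical TypeScript illustration, not Sista AI’s workflow API: `Step`, `runFlow`, `makeSteps`, and `newContext` are invented names, and the steps are stubs standing in for real calls to identity, ticketing, and payment services.

```typescript
// Hypothetical state shared by the steps of one support conversation.
interface TicketContext {
  ticketId: string;
  customerVerified: boolean;
  refundIssued: boolean;
  log: string[]; // audit trail of what the agent did
}

// A step either succeeds or fails with a reason a human agent can read.
type StepResult = { ok: true } | { ok: false; reason: string };

interface Step {
  name: string;
  run: (ctx: TicketContext) => StepResult;
}

type FlowOutcome =
  | { status: "resolved"; ctx: TicketContext }
  | { status: "handoff"; failedStep: string; reason: string; ctx: TicketContext };

// Run steps in order; stop at the first failure and hand off to a human,
// passing along the context so the customer does not have to repeat themselves.
function runFlow(steps: Step[], ctx: TicketContext): FlowOutcome {
  for (const step of steps) {
    const result = step.run(ctx);
    if (!result.ok) {
      ctx.log.push(`${step.name}: failed (${result.reason})`);
      return { status: "handoff", failedStep: step.name, reason: result.reason, ctx };
    }
    ctx.log.push(`${step.name}: ok`);
  }
  return { status: "resolved", ctx };
}

function newContext(ticketId: string): TicketContext {
  return { ticketId, customerVerified: false, refundIssued: false, log: [] };
}

// Stub steps; real ones would call identity, ticketing, and payment services.
function makeSteps(identityCheckPasses: boolean): Step[] {
  return [
    {
      name: "verify-identity",
      run: (ctx) => {
        ctx.customerVerified = identityCheckPasses;
        if (!identityCheckPasses) return { ok: false, reason: "identity not verified" };
        return { ok: true };
      },
    },
    { name: "update-ticket", run: () => ({ ok: true }) },
    {
      name: "trigger-refund",
      run: (ctx) => {
        ctx.refundIssued = true;
        return { ok: true };
      },
    },
  ];
}

const outcome = runFlow(makeSteps(true), newContext("T-1001"));
console.log(outcome.status, outcome.ctx.log);
```

Stopping at the first failed step, instead of retrying silently or skipping ahead, is what keeps the handoff graceful: the refund never runs for an unverified customer, and the human agent receives a log showing exactly where the flow stopped.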

Safety, infrastructure, and a practical path forward

As capability scales, so does responsibility. Governments are updating guardrails to tackle deepfake fraud and harmful misuse, while cloud providers and labs are investing billions in compute to keep real-time services reliable. For product owners, the implications are straightforward: instrument your agents with permissions, logs, and clear fallbacks; ground responses in vetted sources; and plan for multilingual, accessibility-first experiences. A simple sanity check helps: can your voice agent justify its answers, decline risky requests, and hand off gracefully to humans? Sista AI was built with these realities in mind, offering configurable permissions, integrated retrieval-augmented generation (RAG), and a no-code dashboard that make it easier to deploy responsibly across sites and apps. If you’re exploring a voice-first layer for your product, try a live conversation in the Sista AI Demo to see real-time understanding and UI control. When you’re ready to pilot, you can sign up and ship an embedded voice agent without rewiring your codebase.
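The first item on that checklist (permissions, logs, and clear fallbacks) can be sketched as a small policy gate that every UI action passes through before the agent acts. The sketch assumes a hypothetical per-site `Policy` with an allowlist and a list of actions that need user confirmation. The action names mirror the scroll/click/type/navigate capabilities described above, plus an invented `submit_payment`; none of these names come from Sista AI’s actual configuration format.

```typescript
// Hypothetical UI actions a voice agent might request on a page.
type Action = "scroll" | "click" | "type" | "navigate" | "submit_payment";

type Decision =
  | { kind: "allow" }
  | { kind: "confirm"; prompt: string } // ask the user before acting
  | { kind: "decline"; reason: string };

// Hypothetical per-site policy configured by the product owner.
interface Policy {
  allowed: Action[];
  requireConfirmation: Action[];
}

// Default-deny: anything not explicitly allowed is declined.
function decide(action: Action, policy: Policy): Decision {
  if (policy.allowed.indexOf(action) === -1) {
    return { kind: "decline", reason: `"${action}" is not permitted on this site` };
  }
  if (policy.requireConfirmation.indexOf(action) !== -1) {
    return { kind: "confirm", prompt: `Should I ${action.replace("_", " ")}?` };
  }
  return { kind: "allow" };
}

// Record every decision with a timestamp so reviewers can audit the agent.
function decideAndLog(action: Action, policy: Policy, log: string[]): Decision {
  const decision = decide(action, policy);
  log.push(`${new Date().toISOString()} ${action} -> ${decision.kind}`);
  return decision;
}

const sitePolicy: Policy = {
  allowed: ["scroll", "click", "navigate", "submit_payment"],
  requireConfirmation: ["submit_payment"],
};
const auditLog: string[] = [];

console.log(decideAndLog("scroll", sitePolicy, auditLog)); // allowed
console.log(decideAndLog("type", sitePolicy, auditLog)); // declined: not on the allowlist
console.log(decideAndLog("submit_payment", sitePolicy, auditLog)); // needs confirmation
```

Defaulting to decline for anything not on the allowlist keeps the failure mode conservative, the confirm branch gives the user a chance to stop a consequential action, and each log line records the decision for later review.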

Stop Waiting. AI Is Already Here!

It’s never been easier to integrate AI into your product. Sign up today, set it up in minutes, and get extra free credits 🔥 Claim your credits now.

Don’t have a project yet? You can still try it directly in your browser and keep your free credits. Try the Chrome Extension.

For more information, visit sista.ai.